We have all heard about the impending Internet of Things (IoT) revolution. As a matter of fact, such talk has been going on for many years. As far back as 1966, German computer scientist Karl Steinbuch said, “In a few decades time, computers will be interwoven into almost every industrial product.” But with Machine-to-Machine (M2M) deployments and several “intelligent” devices already in place, perhaps the IoT revolution is finally upon us. Even so, to a large degree we seem to have adopted an almost passive approach to the design and deployment of IoT networks. We expect bumps in the road as more of these devices are deployed, and we believe that best practices will eventually be developed and that the devices and networks will become more efficient. While it is certainly true that the technologies will mature over time, we can also take a more proactive approach to the impending IoT revolution and ensure that these systems meet our requirements.

Unfortunately, current system architectures were not designed with the IoT in mind. Few systems in their current form can handle the traffic of a network consisting of millions of devices. Furthermore, we must not think of such networks only in terms of device counts, because millions of devices may very well generate billions of packets of data. It is common to treat bandwidth as the primary metric when designing wireless networks and to ask, “Do we have the bandwidth to handle this data?” But as we move to the IoT paradigm, we must take into account much more than bandwidth. Foremost among these factors is latency.

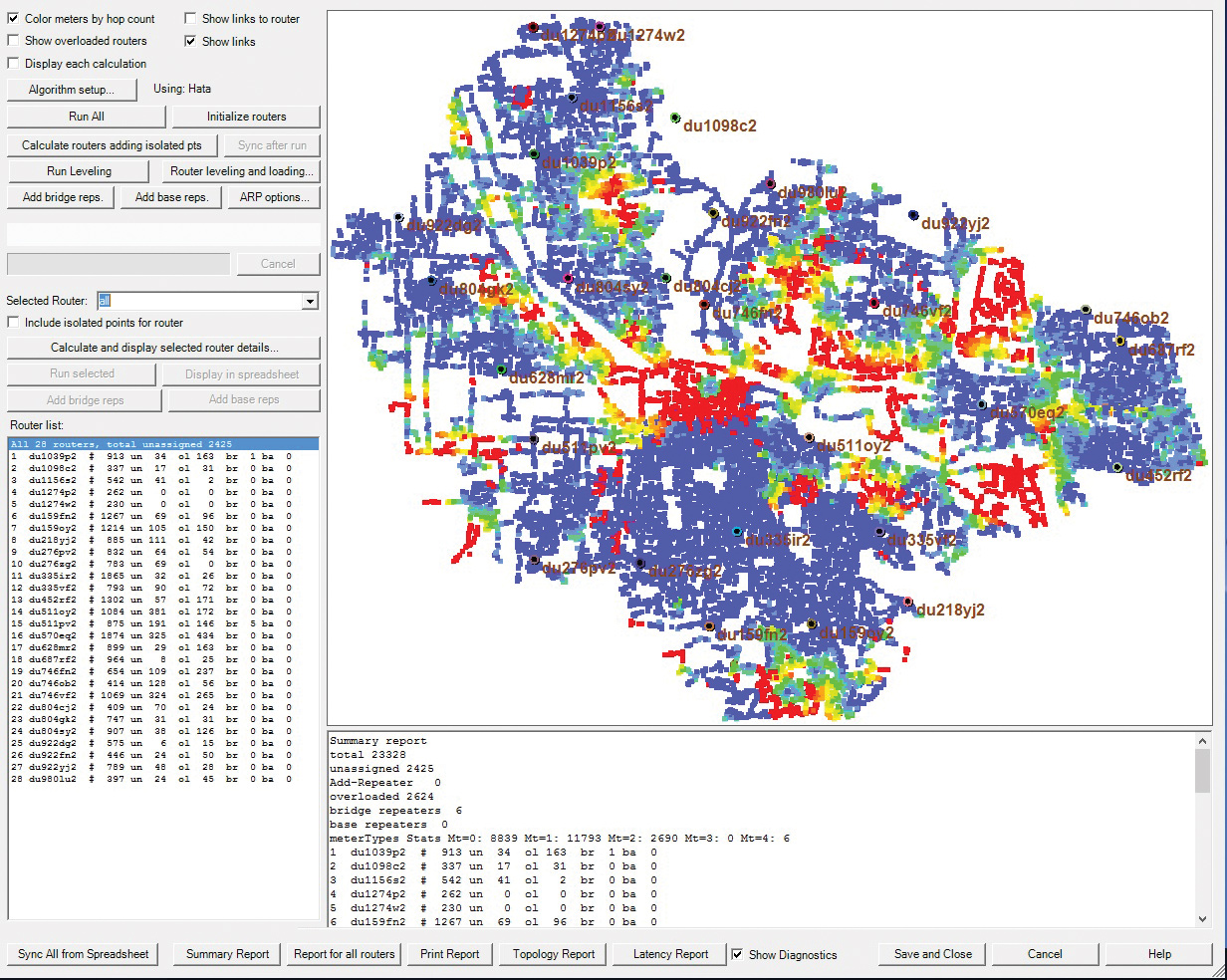

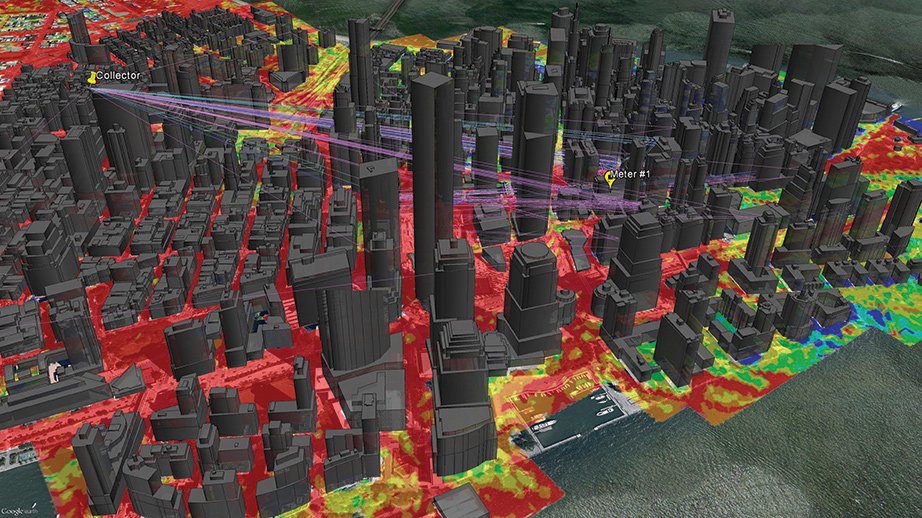

Node connectivity and traffic report


A recent study by Forrester Consulting said that “47% of consumers expect a web page to load in two seconds or less…” The study went on to say that “40% of consumers will wait no more than three seconds for a web page to render before abandoning the site.” It is no secret that we demand instantaneous results. We expect an Instagram picture to post immediately, and we want a YouTube video to start streaming the instant we click the play button. The IoT will be no different, and consumers may be even more impatient as the IoT encroaches further into our daily lives. Would a consumer, for example, be willing to wait for a light to come on after flipping the switch? We expect “real world” things such as switching on a light to occur in “real time.” Therefore, if the IoT is to be a part of our “real world,” we will expect results in “real time.” Furthermore, we will demand that the IoT improve and add efficiencies to the daily lives of consumers and increase productivity in business and enterprise, something that will be difficult to achieve if our devices and apps do not respond in real time. And of course, mission-critical applications such as public safety, military and disaster recovery will benefit greatly from the advantages of the IoT, and these applications must have instantaneous results.

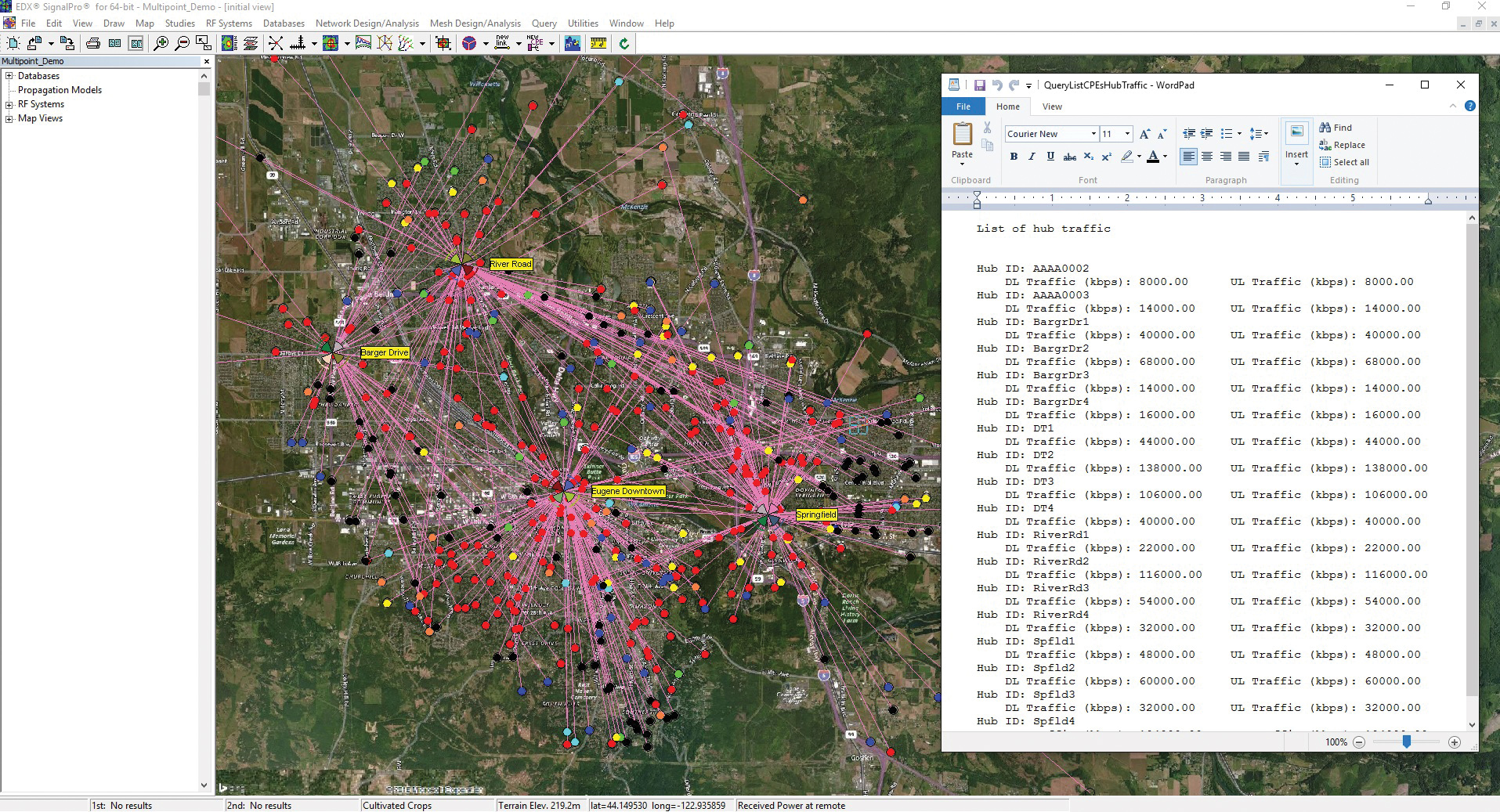

Base to hub links and traffic


When we attempt to solve latency issues in these networks, one of the immediate things that comes to mind is the proximity of consumers to data and processing centers. While it is true that the location of data centers in relation to the consumers can either increase or decrease latency, we must be careful that we don’t arbitrarily start moving data centers based on geography alone.

Poorly located assets can lead to non-ideal data routing within the network, causing congestion and paradoxically increasing latency while attempting to alleviate it. But while reducing the distance data must travel is the most obvious factor in decreasing latency, it cannot be the only thing we consider.
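To see why proximity alone can mislead, consider a rough sketch in Python that adds propagation delay to a simple queueing delay. The M/M/1 queue model, the 200,000 km/s signal speed and every traffic figure here are illustrative assumptions, not values from any study or real network.

```python
# Illustrative only: the queue model and all numbers below are assumptions.
SIGNAL_SPEED_KM_PER_S = 200_000.0  # roughly two-thirds of the speed of light, as in fiber


def propagation_delay_ms(distance_km: float) -> float:
    """One-way propagation delay over the given distance, in milliseconds."""
    return distance_km / SIGNAL_SPEED_KM_PER_S * 1000.0


def queueing_delay_ms(arrival_rate_pps: float, service_rate_pps: float) -> float:
    """Mean time a packet spends in an M/M/1 queue (waiting plus service), in ms."""
    if arrival_rate_pps >= service_rate_pps:
        raise ValueError("offered load meets or exceeds capacity; the queue is unstable")
    return 1000.0 / (service_rate_pps - arrival_rate_pps)


def total_delay_ms(distance_km: float, arrival_rate_pps: float, service_rate_pps: float) -> float:
    """Propagation delay plus mean queueing delay for a single processing site."""
    return propagation_delay_ms(distance_km) + queueing_delay_ms(
        arrival_rate_pps, service_rate_pps
    )


# A nearby site running at 99% load versus a distant site running at 20% load.
near_but_busy = total_delay_ms(distance_km=50, arrival_rate_pps=9_900, service_rate_pps=10_000)
far_but_idle = total_delay_ms(distance_km=1_000, arrival_rate_pps=2_000, service_rate_pps=10_000)

print(f"near but congested: {near_but_busy:.3f} ms")
print(f"far but lightly loaded: {far_but_idle:.3f} ms")
```

Under these assumed numbers the nearby site is the slower of the two, because queueing at a heavily loaded node outweighs the distance it saves.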

We must also examine the scale of these systems, which cannot be determined from a strictly geographical proximity perspective. When we talk about an IoT network, we are talking about several systems serving a large number of devices, all of which can be sending and receiving data, communicating under different protocols, in real time, alongside existing devices that already use the internet. All of this data calls for a network that is intelligent enough to interpret it and capable of routing it properly. And all of this needs to happen instantly!

Such a network and its performance cannot be measured from a strictly hardware perspective based on technical specifications and pre-existing system parameters. An IoT network will need to be understood from a data analytics perspective. This includes real-world data that provides traffic trends, averages, peak loads and the traffic that may occur in emergency situations, as well as analysis of the actual data that will flow through the network. Both of these types of datasets are instrumental to the successful planning and deployment of a network. Indeed, this data analytics approach may prove to be even more critical than the hardware perspective, since adding more devices to a system to accommodate more traffic can lead to further issues of scaling and traffic, not to mention an increase in cost. From a planning, performance and budget perspective, it will behoove engineers to ensure data is distributed within a network intelligently.

Ray tracing study


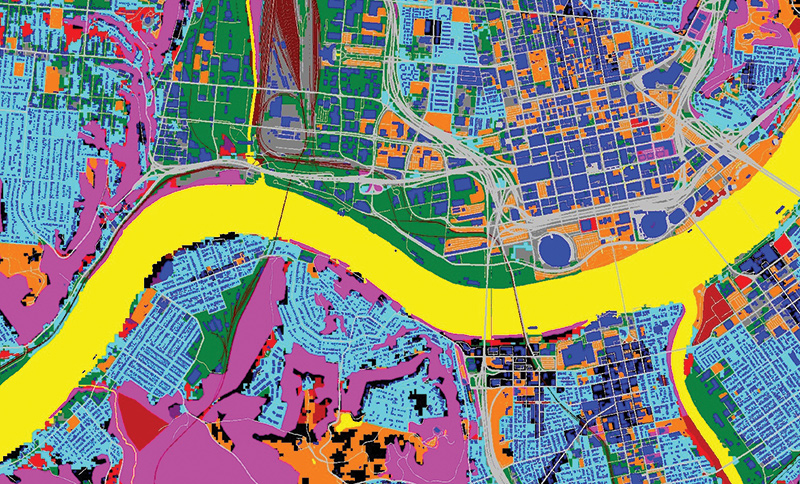

Fortunately, the tools to support this approach are available, and this is where the proactive approach mentioned earlier comes into play. We can analyze system performance and properly plan a network even before deployment by taking into account various real-world as well as hypothetical factors. In doing so, scenarios that include any number of variables can be accounted for to ensure a properly dimensioned network. For example, one can use databases that allow for the creation of a 3D model of a given service area that includes geographical and physical characteristics such as land use, terrain elevations and foliage. These databases and models can also include building information so that the individual attributes of a given building, along with the other elements in our model, can provide a real-world visualization of a network’s performance before deployment. These models and calculations allow for the inclusion of utility poles and other asset mounting locations in order to help engineers determine the best physical locations for their assets and devices. Furthermore, exact hardware and mobile device specifications can be used in these calculations in order to determine preferred equipment as well as potential coverage gaps in a network.

The real value of such calculations, and where we are most likely to alleviate latency issues prior to deployment, comes from the real-world usage models that can be created. For example, once we have created a model of a network with the service area information, the assets to be mounted and the mobile devices to be deployed, we can then model traffic through the network to determine its overall health. By analyzing the traffic going through each node, we can then ensure that the network is passing data optimally, that it is redundant and will accommodate future expansion, and that there are no critical or weak spots in the system. Of course, this information can be used to keep latency minimal while modeling average traffic, peak traffic or other scenarios. Specific databases can be used in these models, such as social media data that shows traffic in a given area over a set period of time, to indicate where traffic is likely to occur and where it is most likely to flow at any given time. We can also take into account various service types and adjust our network accordingly to accommodate both latency and bandwidth requirements. For example, in a public safety application in which low latency is a requirement but bandwidth demand may be lower than in other applications, a network can be dimensioned specifically to the service behavior. By modeling various scenarios based upon the data available to us, engineers can ensure a network is robust and redundant before deployment. Latency, coverage gaps and critical points are eliminated or minimized, and network buildouts fall within budget forecasts.
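As a minimal sketch of what analyzing the traffic through each node might look like, the Python below routes a few traffic demands over a toy mesh and totals the load at every node. The topology, node names, packet rates, capacity threshold and fewest-hop routing rule are all hypothetical; a real planning tool would draw on the terrain, building and equipment data described above.

```python
from collections import defaultdict, deque

# Hypothetical mesh: node names and links are assumptions for illustration.
LINKS = {
    "hub": ["base1", "base2"],
    "base1": ["hub", "meter_a", "meter_b"],
    "base2": ["hub", "meter_c"],
    "meter_a": ["base1"],
    "meter_b": ["base1"],
    "meter_c": ["base2"],
}

# Assumed traffic demands in packets per second: (source, destination) -> rate.
DEMANDS = {
    ("meter_a", "hub"): 400,
    ("meter_b", "hub"): 500,
    ("meter_c", "hub"): 300,
}

CAPACITY_PPS = 800  # assumed per-node limit before the node counts as a weak spot


def shortest_path(links, src, dst):
    """Breadth-first search; returns the fewest-hop path from src to dst, or None."""
    previous = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path = []
            while node is not None:
                path.append(node)
                node = previous[node]
            return path[::-1]
        for neighbor in links[node]:
            if neighbor not in previous:
                previous[neighbor] = node
                queue.append(neighbor)
    return None


def node_loads(links, demands):
    """Add each demand's rate to every node on its fewest-hop path."""
    loads = defaultdict(float)
    for (src, dst), rate_pps in demands.items():
        path = shortest_path(links, src, dst)
        if path is None:
            raise ValueError(f"no route from {src} to {dst}")
        for node in path:
            loads[node] += rate_pps
    return dict(loads)


loads = node_loads(LINKS, DEMANDS)
hot_spots = sorted(node for node, load in loads.items() if load > CAPACITY_PPS)

for node, load in sorted(loads.items()):
    print(f"{node}: {load:.0f} pps")
print("nodes over assumed capacity:", hot_spots)
```

Rerunning the same calculation with peak or emergency demand figures in place of the averages is one simple way to compare scenarios before anything is deployed.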

High resolution model of service area


We have grown accustomed to the instantaneous nature of the internet and our devices. Moving forward, we will have to develop and embrace new paradigms to ensure evolving networks such as the IoT continue to meet the expectations of consumers, enterprise and mission-critical applications. There is no doubt that as more of these networks are deployed there will be some growing pains. IoT networks may not be as efficient out of the gate as they will be 20 years from now, and the overall health of these systems will, in no small part, depend on instrumentation that analyzes real-time performance once they are in use. However, a system that is properly planned and dimensioned before deployment will help smooth the transition into an IoT world.

About the Author

Bob Akins is the sales and marketing manager at EDX Wireless. He is responsible for identifying and developing new opportunities across the wireless industry and actively contributes to not only sales and marketing, but also support and product development. In this role, Akins has worked with utilities, smart grid vendors and consultants world-wide as they plan and deploy AMI, distribution automation and other mesh networks. Mr. Akins joined EDX in 2012.
